Jun 10, 2012. By default, Hibernate caches every persisted object in the session-level cache, so a job that inserts a large number of rows in a single session will eventually fail with an OutOfMemoryError, somewhere around the 50,000th row. You can avoid this problem by using batch processing with Hibernate.

The code itself and the Hibernate configuration look correct (by correct I mean that they follow the idiom from the documentation). But here are some additional suggestions. As already mentioned, make absolutely sure that you aren't using an ID generator that defeats batching, like IDENTITY. When using GenerationType.AUTO, the persistence provider picks a strategy depending on the database, so you might have to change it to a TABLE or SEQUENCE strategy (because Hibernate can then cache the IDs using a hi-lo algorithm). Also make sure that Hibernate is batching as expected. To do so, activate logging and monitor the BatchingBatcher, which logs the size of each batch it executes. In your particular case, you might actually consider using (once the problem is solved, of course).

A few things:

Can you quantify 'excruciatingly slow'? How many inserts per second are you achieving? What rate do you think you should have instead? What type of load is the database itself under? Are others reading from the table at the same time?

How are you connecting to the database? Is all of this occurring in a single transaction, re-using the same connection?

Are you by any chance using an identity identifier? The documentation states: Hibernate disables insert batching at the JDBC level transparently if you use an identity identifier generator.

Thanks for the response, Matt. It looks like I am getting roughly 4 inserts per second. Can I expect to dramatically improve this?

My DAO objects contain a SessionFactory object which is wired in via Spring dependency injection. The SessionFactory class I'm using is org.springframework.orm.hibernate3.annotation.AnnotationSessionFactoryBean. The transaction manager class I'm using is org.springframework.orm.hibernate3.HibernateTransactionManager and I have enabled transactional behavior based on annotations in my Spring application context config file. – Aug 12 '10 at 16:27.
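The hi-lo idea mentioned in the answer above can be sketched in plain Java. This is a simplified model, not Hibernate's actual generator: the class name `HiLoSketch`, the `nextHiInDatabase` field (a stand-in for a database sequence or table row), and the block size are all assumptions made for illustration. The point it shows is that one simulated round trip reserves a whole block of IDs, so inserts do not need to wait on the database for each key the way they do with IDENTITY.

```java
class HiLoSketch {
    // Stand-in for the sequence or table row that stores the next "hi" value.
    private long nextHiInDatabase = 0;
    // Number of simulated round trips made to fetch a new "hi" value.
    private int roundTrips = 0;

    private final int blockSize;
    private long hi;
    private int lo;

    HiLoSketch(int blockSize) {
        this.blockSize = blockSize;
        this.lo = blockSize; // forces a fetch on the first call
    }

    long nextId() {
        if (lo >= blockSize) {
            // One simulated database round trip reserves blockSize IDs.
            hi = nextHiInDatabase++;
            roundTrips++;
            lo = 0;
        }
        return hi * blockSize + lo++;
    }

    int roundTrips() {
        return roundTrips;
    }

    public static void main(String[] args) {
        HiLoSketch generator = new HiLoSketch(50);
        for (int i = 0; i < 100; i++) {
            generator.nextId();
        }
        System.out.println("round trips for 100 ids: " + generator.roundTrips());
    }
}
```

With a block size of 50, generating 100 IDs costs two simulated round trips instead of 100.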

This will create 100000 records in the STUDENT table. Hibernate batch processing is powerful, but it has many pitfalls that developers must be aware of in order to use it properly and efficiently.

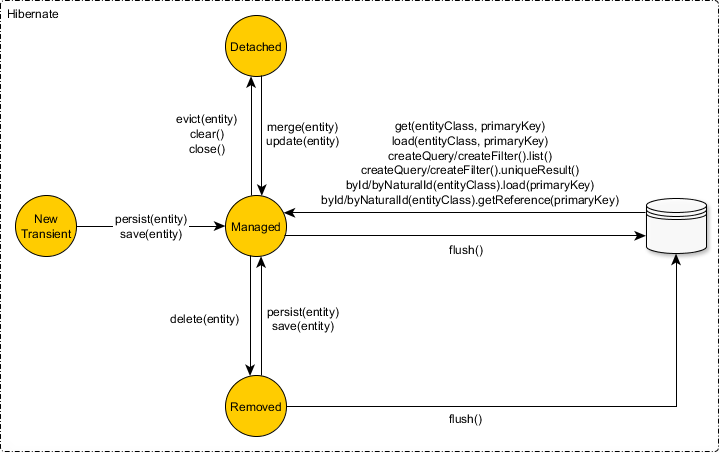

Most people who use batching probably find out about it by attempting a large operation and learning the hard way why it is needed: they run out of memory. Once this is resolved, they assume that batching is working properly. The problem is that even if you are flushing your first-level cache, you may not be batching your SQL statements. Hibernate flushes by default for the following reasons:

1. Before some queries
2. When commit is executed
3. When session.flush is executed

The thing to note here is that every persistent object stays in the first-level cache (your JVM's memory) until the session is cleared or closed. Flushing alone writes pending changes to the database but does not evict the objects.

So if you are iterating over a million objects, you will have at least a million objects in memory. To avoid this problem, you need to call flush and then clear on the session at regular intervals. The Hibernate documentation recommends that you flush every n records, where n is equal to the hibernate.jdbc.batch_size parameter. A Hibernate Batch example shows a trivial batch process.
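A minimal sketch of that flush-and-clear loop follows. To keep it runnable without Hibernate on the classpath, it is written against `BatchSession`, a hypothetical interface that mirrors only the `save`, `flush`, and `clear` methods of `org.hibernate.Session`; `CountingSession` is likewise an illustrative fake that tracks how many objects are held at once. In a real job the loop body would be the same, with a Hibernate session and transaction in place of the fake, and the batch size of 50 is an assumed value that should match hibernate.jdbc.batch_size.

```java
class BatchInsertSketch {

    // Hypothetical stand-in for the three org.hibernate.Session methods used here.
    interface BatchSession {
        void save(Object entity);
        void flush();
        void clear();
    }

    // Saves `total` objects, flushing and clearing every `batchSize` saves.
    // Returns the number of flush/clear cycles performed.
    static int insertInBatches(BatchSession session, int total, int batchSize) {
        int cycles = 0;
        for (int i = 1; i <= total; i++) {
            session.save("student-" + i); // a real job would save an entity here
            if (i % batchSize == 0) {
                session.flush(); // push the pending inserts to the database
                session.clear(); // release the first-level cache
                cycles++;
            }
        }
        if (total % batchSize != 0) {
            // Flush the final, partially filled batch.
            session.flush();
            session.clear();
            cycles++;
        }
        return cycles;
    }

    // Fake session that records the largest number of objects cached at once.
    static class CountingSession implements BatchSession {
        private int cached = 0;
        private int peakCached = 0;

        public void save(Object entity) {
            cached++;
            peakCached = Math.max(peakCached, cached);
        }

        public void flush() {
            // Nothing to write in this fake.
        }

        public void clear() {
            cached = 0;
        }

        int peakCached() {
            return peakCached;
        }
    }

    public static void main(String[] args) {
        CountingSession session = new CountingSession();
        int cycles = insertInBatches(session, 100000, 50);
        System.out.println("cycles=" + cycles + ", peak cached=" + session.peakCached());
    }
}
```

For 100000 records and a batch size of 50, the loop performs 2000 flush/clear cycles and never holds more than 50 objects in the fake cache.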

There are two reasons for batching your Hibernate database interactions. The first is to maintain a reasonable first-level cache size so that you do not run out of memory. The second is that you want to batch the inserts and updates so that they are executed efficiently by the database. The example above will accomplish the first goal but not the second.

    Student student = new Student();
    Address address = new Address();
    student.setName("DINESH RAJPUT");
    address.setCity("DELHI");
    student.setAddress(address);
    session.save(student);

The problem is that Hibernate looks at each SQL statement and checks whether it is the same statement as the previously executed one.

If it is, and the batch has not yet reached the configured batch size, Hibernate adds the statement to the current batch using JDBC2 batching. However, if your statements look like the example above, Hibernate will see alternating insert statements (one for the student, one for the address) and will flush an individual insert statement for each record processed.
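The effect of statement order can be illustrated with a small simulation. This is a plain-Java model of the rule described above, not Hibernate code: a new batch is started whenever the SQL text differs from the previous statement or the current batch is full. The class name, the SQL strings, and the column names are assumptions for illustration only.

```java
import java.util.ArrayList;
import java.util.List;

class BatchGroupingSketch {

    // Illustrative SQL; the column names are hypothetical.
    static final String STUDENT_SQL = "insert into STUDENT (NAME, ADDRESS_ID) values (?, ?)";
    static final String ADDRESS_SQL = "insert into ADDRESS (CITY) values (?)";

    // Counts the batches executed when a new batch starts on every change
    // of SQL text or when the current batch reaches batchSize.
    static int countBatches(List<String> statements, int batchSize) {
        int batches = 0;
        int inCurrentBatch = 0;
        String previous = null;
        for (String sql : statements) {
            if (!sql.equals(previous) || inCurrentBatch == batchSize) {
                batches++;
                inCurrentBatch = 0;
            }
            inCurrentBatch++;
            previous = sql;
        }
        return batches;
    }

    // Address and student inserts interleaved, one pair per student.
    static List<String> alternating(int students) {
        List<String> statements = new ArrayList<>();
        for (int i = 0; i < students; i++) {
            statements.add(ADDRESS_SQL);
            statements.add(STUDENT_SQL);
        }
        return statements;
    }

    // All address inserts first, then all student inserts.
    static List<String> grouped(int students) {
        List<String> statements = new ArrayList<>();
        for (int i = 0; i < students; i++) {
            statements.add(ADDRESS_SQL);
        }
        for (int i = 0; i < students; i++) {
            statements.add(STUDENT_SQL);
        }
        return statements;
    }

    public static void main(String[] args) {
        System.out.println("alternating: " + countBatches(alternating(100), 50));
        System.out.println("grouped: " + countBatches(grouped(100), 50));
    }
}
```

With 100 students and a batch size of 50, the alternating order produces 200 batches of one statement each, while the grouped order produces 4. Hibernate's hibernate.order_inserts setting is intended to sort inserts by entity so that they arrive in something like the grouped order.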

So 1 million new students would result in a total of 2 million individually executed insert statements in this case. This is extremely bad for performance.

About The Author

Dinesh Rajput is the chief editor of the website Dineshonjava, a technical blog dedicated to Spring and Java technologies. It has a series of articles related to Java technologies. Dinesh has been a Spring enthusiast since 2008 and is a Pivotal Certified Spring Professional, the author of the book Spring 5 Design Pattern, and a blogger. He has more than 10 years of experience with different aspects of Spring and Java design and development.

His core expertise lies in the latest version of Spring Framework, Spring Boot, Spring Security, creating REST APIs, Microservice Architecture, Reactive Pattern, Spring AOP, Design Patterns, Struts, Hibernate, Web Services, Spring Batch, Cassandra, MongoDB, and Web Application Design and Architecture. He is currently working as a technology manager at a leading product and web development company. He worked as a developer and tech lead at Bennett, Coleman & Co. Ltd and was the first developer at his previous company, Paytm. Dinesh is passionate about the latest Java technologies and loves to write technical blog posts about them.

He is a very active member of the Java and Spring community on different forums. When it comes to the Spring Framework and Java, Dinesh tops the list!

Hey Dinesh, I just had a look at your profile and it is quite amazing. I see you have experience with Spring Batch.

In my project I have worked on schedulers that process a very large set of data from multiple tables. Very complex queries read the data, the data is then manipulated, and the results are written to another table (in loops). So the database engine does a great deal of processing. If I use Spring Batch, can the load on the database be decreased?

Waiting for an answer. Please help.